As a Google enthusiast, I have been following Google’s conferences and events ever since Google I/O started. Google has grown from being just a search engine into the many offerings we see today.

Made by Google is one of those offerings; it focuses on Google-manufactured hardware and consumer devices as a whole.

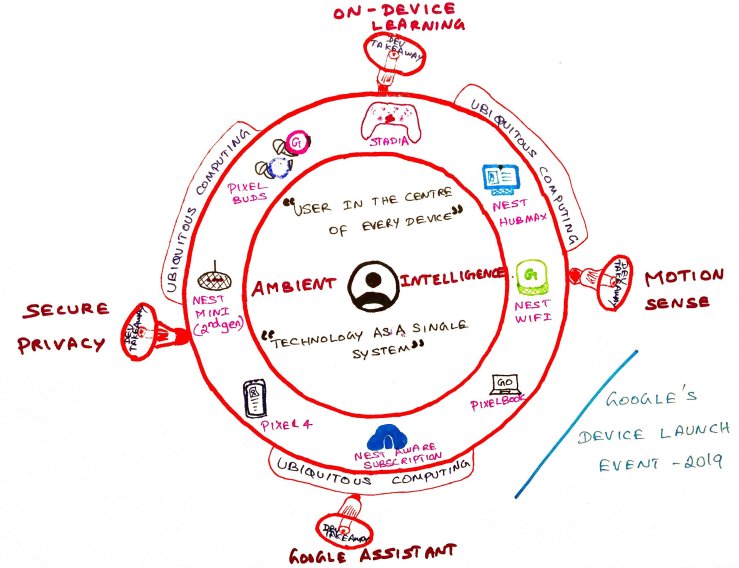

Google’s philosophy and building blocks for these devices are:

1) Unified user experience across the range of hardware they offer

2) Seamless transition of user experience across all of the devices

This was evident at the launch event (October 2019), where they kept emphasizing the following:

1) The user at the center of every system they design

2) Technology as a single system rather than separate elements for the different systems they build

So what are they trying to achieve?

1) Ubiquitous computing, as commonly defined online:

“Ubiquitous computing is an advanced computing concept where computing is made to appear everywhere and anywhere. In contrast to desktop computing, ubiquitous computing can occur using any device, in any location, and in any format.”

2) Ambient intelligence, as commonly defined online:

“Ambient intelligence (AmI) is the element of a pervasive computing environment that enables it to interact with and respond appropriately to the humans in that environment.”

Compared to rivals such as Amazon, Microsoft, and Apple, Google has not traditionally been a hardware company, and that is reflected in the revenue its hardware sales bring in. With the latest product lineup, Google is bringing ‘ambient computing’ to the user: connected devices are always listening and help the user by understanding context from the user’s perspective.

So what are the key takeaways for the developer community from Google’s 2019 product launch event?

1) Motion Sense and face unlock – Biometric authentication and gesture navigation are two features that any developer would want in their application, since they simplify app usage and improve the user experience.

However, Google has made it clear that it is not opening up the Motion Sense APIs for public use. Motion Sense is available in Google’s own apps and in some third-party apps. How Google will let developers use the Motion Sense API remains to be seen.

Face unlock is supported through BiometricPrompt, which was introduced in Android 9. With Android 10, Google also introduced BiometricManager, which developers can use to query whether biometric authentication is available. Based on the result, the app can show the appropriate BiometricPrompt to the user. For security reasons, Google recommends that OEMs achieve strong IAR (imposter accept rate) and SAR (spoof accept rate) ratings for their biometrics to participate in the BiometricPrompt class.

The Android site describes examples of the attacks these rates are measured against.

More info here – https://source.android.com/security/biometric
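To make the two rates a little more concrete: each one is the fraction of attack attempts that the biometric sensor wrongly accepts. Here is a minimal Python sketch of that arithmetic. The function names, the sample counts, and the `max_rate` value are all my own illustrative assumptions, not figures from the Android documentation, so check the link above for the actual test protocol and limits.

```python
def accept_rate(accepted_attempts: int, total_attempts: int) -> float:
    """Fraction of attack attempts that the sensor wrongly accepted."""
    if total_attempts <= 0:
        raise ValueError("total_attempts must be positive")
    if not 0 <= accepted_attempts <= total_attempts:
        raise ValueError("accepted_attempts must be between 0 and total_attempts")
    return accepted_attempts / total_attempts


def meets_threshold(rate: float, max_rate: float) -> bool:
    """True when the measured rate is at or below the allowed maximum."""
    return rate <= max_rate


# Hypothetical lab results: 3 of 100 spoof attempts unlocked the device.
sar = accept_rate(accepted_attempts=3, total_attempts=100)
# Hypothetical imposter test: 12 of 100 attempts were accepted.
iar = accept_rate(accepted_attempts=12, total_attempts=100)

# max_rate=0.07 is a placeholder chosen for this sketch, not a documented limit.
print(f"SAR={sar:.2%} ok={meets_threshold(sar, 0.07)}")
print(f"IAR={iar:.2%} ok={meets_threshold(iar, 0.07)}")
```

A lower rate means the sensor is harder to fool, which is why a sensor with a high accept rate would not qualify as strong.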

2) On-device learning and processing – Google demonstrated the importance of on-device learning and on-device processing at last year’s I/O. To achieve low latency and quicker responses, Google relies heavily on on-device capabilities in its apps. For example, Federated Learning (FL) is actively used in Google’s keyboard and search apps. Beyond that, with the introduction of the CCPA (California Consumer Privacy Act), federated learning helps because sensitive user data is not sent to a centralized server for machine learning.

What should developers know to achieve this in their apps?

1) TensorFlow Lite – Even with no experience in ML, you can pick from a library of pre-trained models that are ready to use in your apps, or customize them to your needs.

TensorFlow Lite consists of two main components:

- The TensorFlow Lite interpreter, which runs specially optimized models on many different hardware types, including mobile phones, embedded Linux devices, and microcontrollers.

- The TensorFlow Lite converter, which converts TensorFlow models into an efficient form for use by the interpreter, and can introduce optimizations to improve binary size and performance.

More info here – https://www.tensorflow.org/lite/guide
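One optimization the converter can apply is quantization, which stores weights as 8-bit integers instead of 32-bit floats. The sketch below illustrates the idea in plain Python. It is not TensorFlow Lite code, and the function names and the simple min/max scheme are assumptions I picked for illustration.

```python
def quantize(values, num_bits=8):
    """Map floats to unsigned integers using an affine (scale + zero point) scheme."""
    qmin, qmax = 0, 2 ** num_bits - 1
    # The represented range must include 0 so that 0.0 maps to an exact integer.
    lo, hi = min(min(values), 0.0), max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) or 1.0  # fall back to 1.0 for all-zero input
    zero_point = round(qmin - lo / scale)
    quantized = [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]
    return quantized, scale, zero_point


def dequantize(quantized, scale, zero_point):
    """Recover approximate float values from the integer representation."""
    return [(q - zero_point) * scale for q in quantized]


weights = [-1.0, -0.25, 0.0, 0.5, 1.0]
q, scale, zero_point = quantize(weights)
restored = dequantize(q, scale, zero_point)

# Largest difference between an original weight and its restored value.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, max_error <= scale)
```

Each weight now fits in one byte instead of four, at the cost of a small rounding error. That trade-off is what makes the model smaller and often faster on a phone.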

2) TensorFlow Federated – TensorFlow Federated (TFF) is an open-source framework for experimenting with machine learning and other computations on decentralized data. It enables many participating clients to train shared ML models while keeping their data locally.

TFF’s interfaces are organized into two layers:

- Federated Learning (FL) API – High-level interface for applying federated training and evaluation to existing TensorFlow models.

- Federated Core (FC) API – Low-level interface for expressing new federated algorithms by combining TensorFlow with distributed communication operators.

More info here – https://www.tensorflow.org/federated
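The core idea behind federated training, that clients train locally and share only model updates, can be sketched without any framework. The example below is a toy federated averaging loop in plain Python for a one-parameter linear model. It does not use the TFF API, and the client data, learning rate, and function names are made up for illustration.

```python
def local_update(weight, data, learning_rate=0.1, epochs=5):
    """Run a few gradient-descent steps for y = weight * x on one client's data."""
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to the weight.
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= learning_rate * grad
    return weight


def federated_average(client_weights, client_sizes):
    """Combine client weights, weighting each by its number of examples."""
    total = sum(client_sizes)
    return sum(w * n for w, n in zip(client_weights, client_sizes)) / total


# Hypothetical clients; every (x, y) pair follows y = 3x and stays on its client.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(0.5, 1.5), (1.5, 4.5), (1.0, 3.0)],
    [(2.0, 6.0)],
]

global_weight = 0.0
for _ in range(10):
    # Each client starts from the current global weight and trains locally.
    updates = [local_update(global_weight, data) for data in clients]
    # The server only receives the updated weights, never the raw data.
    global_weight = federated_average(updates, [len(d) for d in clients])

print(round(global_weight, 3))
```

After a few rounds the shared weight settles close to 3, even though no client ever sent its (x, y) pairs to the server.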

3) Better Assistant – Actions on Google has been available for quite some time now. While many developers have been using Dialogflow, they should also know the other options for Google Assistant development:

1) Extend your existing mobile app so that it can be opened directly from the Assistant using deep links.

2) Extend your web content to Google Search and the Assistant using markup or templates.

3) Use intents to connect with smart home devices. The Home Graph is important to understand, because it is what lets the Assistant take actions based on context.

4) Create a conversational app from scratch using Dialogflow.

More info here – https://developers.google.com/assistant
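For the smart home option, the Assistant sends your service JSON requests that each carry an intent, and your code replies with JSON. Below is a rough Python sketch of a handler for the SYNC and EXECUTE intents. The request and response shapes follow my understanding of the smart home documentation and should be verified against the link above; the device id, user id, and in-memory `DEVICES` store are hypothetical.

```python
# In-memory stand-in for a real device backend (hypothetical device).
DEVICES = {"lamp-1": {"name": "Bedroom lamp", "on": False}}


def handle_sync(request_id):
    """Describe the user's devices so they can be added to the Home Graph."""
    devices = [
        {
            "id": device_id,
            "type": "action.devices.types.LIGHT",
            "traits": ["action.devices.traits.OnOff"],
            "name": {"name": info["name"]},
            "willReportState": False,
        }
        for device_id, info in DEVICES.items()
    ]
    # "user-123" is a placeholder for your own user identifier.
    return {"requestId": request_id,
            "payload": {"agentUserId": "user-123", "devices": devices}}


def handle_execute(request_id, payload):
    """Apply OnOff commands and report the resulting state."""
    results = []
    for command in payload["commands"]:
        ids = [device["id"] for device in command["devices"]]
        for execution in command["execution"]:
            if execution["command"] == "action.devices.commands.OnOff":
                turn_on = execution["params"]["on"]
                for device_id in ids:
                    DEVICES[device_id]["on"] = turn_on
                results.append({"ids": ids, "status": "SUCCESS",
                                "states": {"on": turn_on, "online": True}})
    return {"requestId": request_id, "payload": {"commands": results}}


def handle_request(body):
    """Dispatch one fulfillment request based on its intent."""
    request_input = body["inputs"][0]
    intent = request_input["intent"]
    if intent == "action.devices.SYNC":
        return handle_sync(body["requestId"])
    if intent == "action.devices.EXECUTE":
        return handle_execute(body["requestId"], request_input["payload"])
    raise ValueError(f"Unsupported intent: {intent}")


# Example: the Assistant asks to turn the lamp on.
response = handle_request({
    "requestId": "req-1",
    "inputs": [{
        "intent": "action.devices.EXECUTE",
        "payload": {"commands": [{
            "devices": [{"id": "lamp-1"}],
            "execution": [{"command": "action.devices.commands.OnOff",
                           "params": {"on": True}}],
        }]},
    }],
})
print(response["payload"]["commands"][0]["status"])
```

A real integration would also need account linking and a handler for state queries; the sketch only shows how intents drive the dispatch.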

4) Securing privacy – Smart home devices sit in the living room, bedroom, kitchen, and so on. Smartphones and wearables hold information about the user’s day-to-day activities. All of these devices carry very personal data, so protecting the user’s privacy is important. Also, under the CCPA, app users have the right to view or delete the data that was collected from them. Developers should have a standard framework that marks personal data when it is collected and exposes it back to the user on request.

More info here – https://privacy.google.com/businesses/compliance/#!?modal_active=none
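One way to picture such a framework is a small store that tags every field as personal or not at the moment it is collected. The Python sketch below is only an illustration of that idea; the class, method names, and sample fields are hypothetical, and a real app would also need persistence, authentication, and audit logging.

```python
from dataclasses import dataclass, field


@dataclass
class UserDataStore:
    """Toy store that tags each collected field as personal or not."""
    # Maps user_id -> {field_name: (value, is_personal)}
    records: dict = field(default_factory=dict)

    def collect(self, user_id, name, value, personal):
        """Save a value and mark, at collection time, whether it is personal data."""
        self.records.setdefault(user_id, {})[name] = (value, personal)

    def export_personal(self, user_id):
        """Return every personal field held for the user (a 'view my data' request)."""
        fields = self.records.get(user_id, {})
        return {name: value for name, (value, personal) in fields.items() if personal}

    def delete_personal(self, user_id):
        """Remove every personal field for the user and return how many were removed."""
        fields = self.records.get(user_id, {})
        personal_names = [name for name, (_, personal) in fields.items() if personal]
        for name in personal_names:
            del fields[name]
        return len(personal_names)


store = UserDataStore()
store.collect("user-1", "email", "alex@example.com", personal=True)
store.collect("user-1", "sleep_hours", 7.5, personal=True)
store.collect("user-1", "app_version", "2.3.0", personal=False)

print(store.export_personal("user-1"))   # personal fields only
print(store.delete_personal("user-1"))   # number of personal fields removed
print(store.export_personal("user-1"))   # nothing personal is left
```

Because the tag is set when the data is collected, view and delete requests become simple lookups instead of a hunt through the codebase.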

Happy learning!