Users across the globe are getting ready for the next level of user experience and comfort: voice.

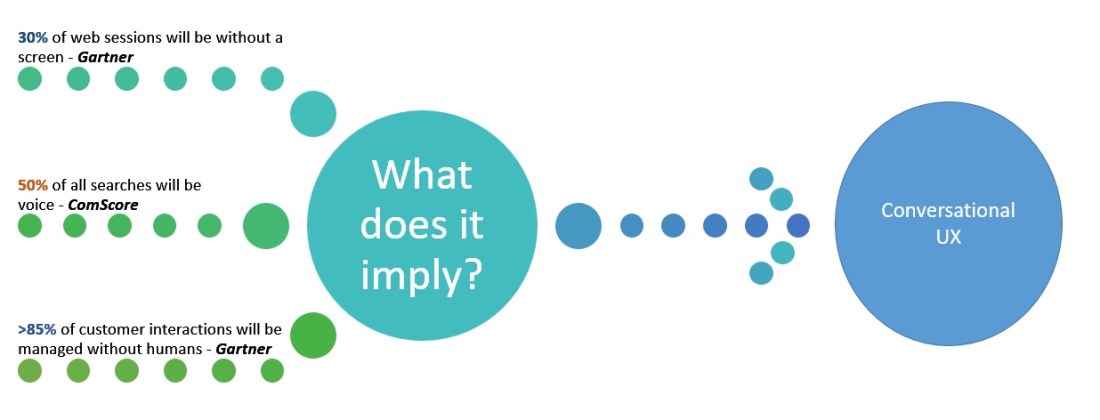

Below is a prediction from many analysts of what this new UX will look like in 2020. As we transition from touching a screen to speaking to machines in a natural way, the new interface eliminates unnecessary physical elements from existing technology. This transition is not limited to software; it will affect hardware as well.

All these factors point toward a transition from touch to voice. Conversational UX is being considered as a new interface even at the enterprise level. Voice biometrics is another area that many security companies are already exploring. One challenge there is that a person's voice changes with age, and advanced ML tools are being used to understand and compensate for this.

Amazon and Google are already competing in this space, each trying to establish itself as the market leader.

So, what should we build to address this new space in UX?

A conversational platform (preferably cross-platform) that can understand and process natural language.

Why should the development interface be cross-platform?

Building one assistant that runs on multiple platforms makes more sense than building a separate assistant for every platform. Training and log data generated across all platforms also help make the assistant smarter.

Does Google have a platform for developing conversational UX?

Yes. Google's Dialogflow provides capabilities for human-computer interaction in natural language.

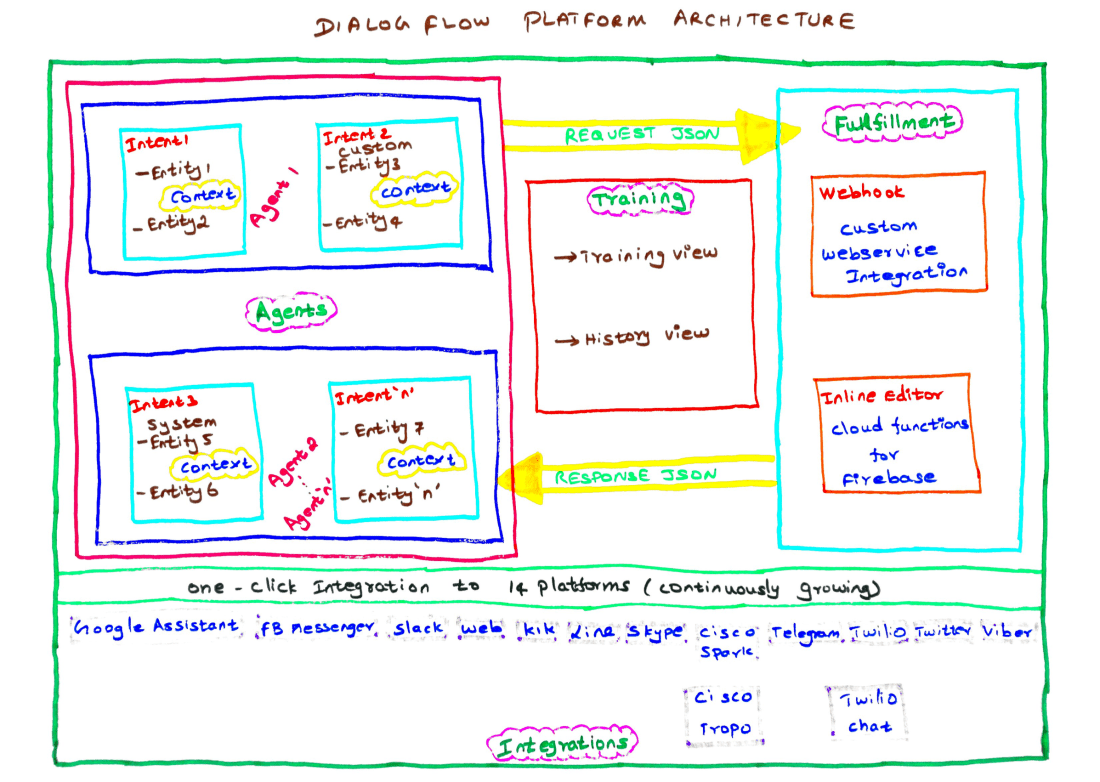

Let’s look in detail at the major components Dialogflow provides for designing and developing conversations.

- Agents – NLP modules that run on different interfaces and hardware. The same agent can run on multiple interfaces. With the help of intents, an agent converts the user's request into actionable data, which is then used to serve the user an appropriate response.

- Intents – A mapping between what the user says and the action the software should take. An intent has four main parts:

  - User says – Examples of what the user might say to invoke the intent

  - Action – A trigger word that tells the software how to act

  - Response – Can be one of two types:

    - Simple text responses

    - Rich messages, such as cards and quick replies

  - Context – The element that bridges the entire conversation, passing scope and content on to subsequent turns.

- Entities – ML and human-defined tools that help map words in natural language, including their synonyms, to higher-level values. The extracted values are used as parameter values in an intent. There are three types of entities:

  - System – Pre-built entities provided by Dialogflow's ML tools (for example, dates and numbers)

  - Developer – Custom entities defined by developers

  - User – Entities that live for 30 minutes within a user's session

- Training – The history view in the Training component lets the developer see every user utterance and the corresponding action the software took. This helps in reassigning intents when needed, and in mapping queries that were not answered properly to the right intents.

- Fulfillment – Both (1) webhooks and (2) Firebase Cloud Functions help resolve core and complex user queries by hosting business logic in web services.

- Integration – Agents built with Dialogflow can be deployed to a variety of interfaces, such as Telegram, Actions on Google, Alexa and LINE.

- Analytics – The agents' usage and performance statistics can be monitored in the Analytics section.
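To make the Fulfillment component more concrete, here is a minimal webhook sketch in Python using only the standard library. It assumes the Dialogflow ES (v2) webhook JSON format, in which the matched intent's action and the extracted entity values arrive under `queryResult`, and the reply goes back as `fulfillmentText` plus optional `outputContexts`. The action name `order.coffee`, the `size` parameter and the `coffee-order` context are hypothetical, chosen only to show how actions, entities and contexts meet in a single request.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def handle_webhook(request: dict) -> dict:
    """Turn a Dialogflow ES (v2) webhook request body into a response body."""
    query = request.get("queryResult", {})
    action = query.get("action", "")          # Action configured on the matched intent
    params = query.get("parameters", {})      # Entity values extracted from the user's words
    session = request.get("session", "")      # e.g. "projects/<project>/agent/sessions/<id>"

    # Hypothetical action and entity parameter, for illustration only.
    if action == "order.coffee":
        size = params.get("size") or "regular"  # Fall back when no size was extracted
        return {
            "fulfillmentText": f"One {size} coffee coming up!",
            # Hypothetical context that keeps the order in scope for the next 5 turns.
            "outputContexts": [
                {
                    "name": f"{session}/contexts/coffee-order",
                    "lifespanCount": 5,
                    "parameters": {"size": size},
                }
            ],
        }

    # Otherwise reuse the static response defined on the intent, if there is one.
    return {"fulfillmentText": query.get("fulfillmentText") or "Sorry, I can't help with that yet."}


class WebhookHandler(BaseHTTPRequestHandler):
    """Minimal HTTP wrapper that passes POSTed JSON to handle_webhook."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        body = json.loads(self.rfile.read(length) or b"{}")
        payload = json.dumps(handle_webhook(body)).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def serve(port: int = 8080) -> None:
    # Local development only: Dialogflow calls fulfillment URLs over HTTPS,
    # so put this behind an HTTPS endpoint before registering it.
    HTTPServer(("", port), WebhookHandler).serve_forever()
```

Keeping `handle_webhook` a pure function of the request dictionary means the business logic can be tested without a running server; `WebhookHandler` and `serve` are only a thin HTTP layer around it.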

Now that we know all of the Dialogflow components, below is the architecture showing how they fit together in the platform.

Designing, developing and deploying your agent to multiple interfaces with Dialogflow is completely free.

Finally, Chatbase (a separate analytics tool) provides detailed analytics on agent usage across multiple interfaces, as well as a flow diagram of the conversations. This helps in understanding the agents' usage patterns.

To start developing your agent, click here.

Happy learning!