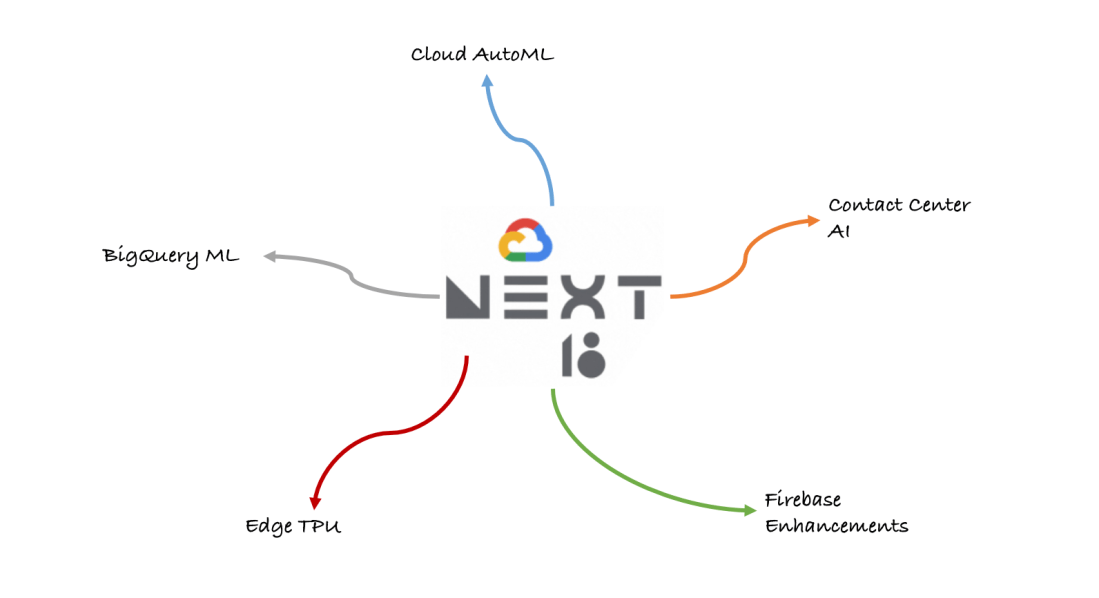

Google’s second edition of Google Cloud Next opened with a series of announcements for its cloud platform. Google made it clear that it is sticking to its AI-first commitment by adding AI to almost every product on its shelf.

As developers, we have all come across the familiar scenario of “It works fine on my system, and I am not sure why it doesn’t work the same on some other system”. Google seems to be addressing this problem by moving everything to the cloud.

I want to highlight some of the announcements that interested me –

AutoML – A quick and easy tool for training models with very little machine learning expertise. Currently, there are 3 products available under AutoML.

1) AutoML Vision – If you have already used the Cloud Vision API, you will recognize AutoML Vision as an extension of it. Once the uploaded images are labeled, AutoML Vision takes care of training a model and of scaling as new training samples are added. The model can be trained further by adding more photos to the training data set. However, Google says a sample of 10 photos is more than sufficient to get a pretty decent prediction.

2) AutoML Natural Language – Derives context and extracts information about people, places, events, etc., based on the sample data fed to the system.

3) AutoML Translation – Custom translation models for domain-specific translation needs. We have to upload translated language pairs; Google has a list of supported language pairs that can be used with AutoML Translation. Once this is done, the custom-trained models return translation results specific to your context and domain.

Contact Center AI – It improves the contact center experience for the customer by setting up a contextual, intuitive customer care experience. Google does this by assisting in 2 ways –

1) Virtual agents

2) Agent assistance

First, Google understands the context of the customer call. Based on the identified context, Contact Center AI suggests the relevant action through seamless integration with the knowledge base. The interface determines which calls actually need to be routed to a human agent and which can be handled by the virtual agent.

BigQuery ML – Enables developers to create and execute machine learning models in BigQuery using standard SQL queries. This lets database SQL practitioners implement machine learning with limited expertise in machine learning itself. Currently Google supports only 2 model types –

1) Linear regression – Used to predict a numerical value.

2) Binary logistic regression – Used to predict outcomes with just two possible answers, such as yes or no.
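To make the difference between the two model types concrete, here is a minimal plain-Python sketch. It is not BigQuery ML code; the function names, weights and the 0.5 threshold are my own illustrative assumptions. It only shows that a linear model outputs a number, while a binary logistic model turns the same kind of score into a yes/no answer.

```python
import math


def predict_linear(weights, bias, features):
    """Linear regression: return a numerical value (weighted sum plus bias)."""
    return bias + sum(w * x for w, x in zip(weights, features))


def predict_binary_logistic(weights, bias, features, threshold=0.5):
    """Binary logistic regression: return (yes_or_no, probability).

    The linear score is squashed into the range 0..1 with the sigmoid
    function and compared against a threshold (0.5 is an assumed default).
    """
    score = predict_linear(weights, bias, features)
    probability = 1.0 / (1.0 + math.exp(-score))
    return probability >= threshold, probability


if __name__ == "__main__":
    # Hypothetical weights, chosen only for illustration.
    print(predict_linear([2.0, 1.0], 0.5, [1.0, 3.0]))
    print(predict_binary_logistic([1.0], 0.0, [2.0]))
```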

One of the main advantages of BigQuery ML is that the data need not be moved out of the data warehouse; rather, the ML comes to the data itself.
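As a rough sketch of what "ML with standard SQL" looks like, the helper below only builds the SQL text for training and prediction; it does not connect to BigQuery. The `CREATE MODEL` statement, the `linear_reg` / `logistic_reg` model types and `ML.PREDICT` come from BigQuery ML, while the helper functions and the dataset, table and column names (`my_dataset.trips`, `fare` and so on) are hypothetical placeholders of mine.

```python
SUPPORTED_MODEL_TYPES = {"linear_reg", "logistic_reg"}


def create_model_sql(model_name, model_type, label_column, training_query):
    """Build a BigQuery ML CREATE MODEL statement as a string."""
    if model_type not in SUPPORTED_MODEL_TYPES:
        raise ValueError(f"unsupported model type: {model_type}")
    return (
        f"CREATE OR REPLACE MODEL `{model_name}`\n"
        f"OPTIONS(model_type='{model_type}', "
        f"input_label_cols=['{label_column}']) AS\n"
        f"{training_query}"
    )


def predict_sql(model_name, input_query):
    """Build a query that runs the trained model over new rows."""
    return f"SELECT * FROM ML.PREDICT(MODEL `{model_name}`, ({input_query}))"


if __name__ == "__main__":
    # Hypothetical dataset, table and column names.
    print(create_model_sql(
        "my_dataset.fare_model",
        "linear_reg",
        "fare",
        "SELECT distance_km, duration_min, fare FROM `my_dataset.trips`",
    ))
    print(predict_sql(
        "my_dataset.fare_model",
        "SELECT distance_km, duration_min FROM `my_dataset.new_trips`",
    ))
```

Both statements would run inside BigQuery itself, so the training data never has to leave the warehouse.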

Edge TPU – Google’s custom-built chip to run AI at the edge. A variety of use cases can run seamlessly without connecting to a cloud server, with almost no latency and high performance for processing at the edge. Build TensorFlow models in the cloud and push them to the Edge TPU chip to run locally on the device.

Improvements to Firebase – Google shared an interesting fact: 43% of 1-star ratings in the Play Store mention stability, bugs and errors, while 73% of 5-star reviews mention good design, usability, speed and stability. Improvements to existing products like Crashlytics and Cloud Functions are helping developers bring their ideas into apps rather than worrying about infrastructure setup and management.

I am excited about how Google is bringing all of its products under an AI-first approach. Please share your thoughts on which product caught your interest at Google Cloud Next 2018.

Happy learning!

