The Internet of Things (IoT) is gaining momentum rapidly, and many prototypes are being built around it. However, we are not there yet: the infrastructure needed to fully realize IoT is not in place.

So, what are the major infrastructure changes required to achieve IoT?

1) Seamless connectivity

Devices stay connected at all times for two-way data transfer

2) Reduction in latency

Less time for data transfer

3) Huge data processing and storage

Enormous data storage and high computational capability for data processing

We almost have all three of the above, or large companies are working on putting that infrastructure in place. However, one of the main principles of IoT is NEAR ZERO LATENCY, and this is the pillar that still needs to improve. We are not talking about adding compute capacity by scaling servers horizontally or vertically. Rather, this needs a new solution that departs from the traditional cloud-based architecture. Companies like Amazon and Google are moving towards deploying AWS and GCP servers across many distributed regions rather than in a single centralized global region.

This is a move from a centralized cloud to a distributed cloud. Even then, it will not have a huge impact on latency, and managing the distributed cloud may add maintenance overhead.

Solutions should focus more on delegating functionality from the cloud level to the downstream levels.

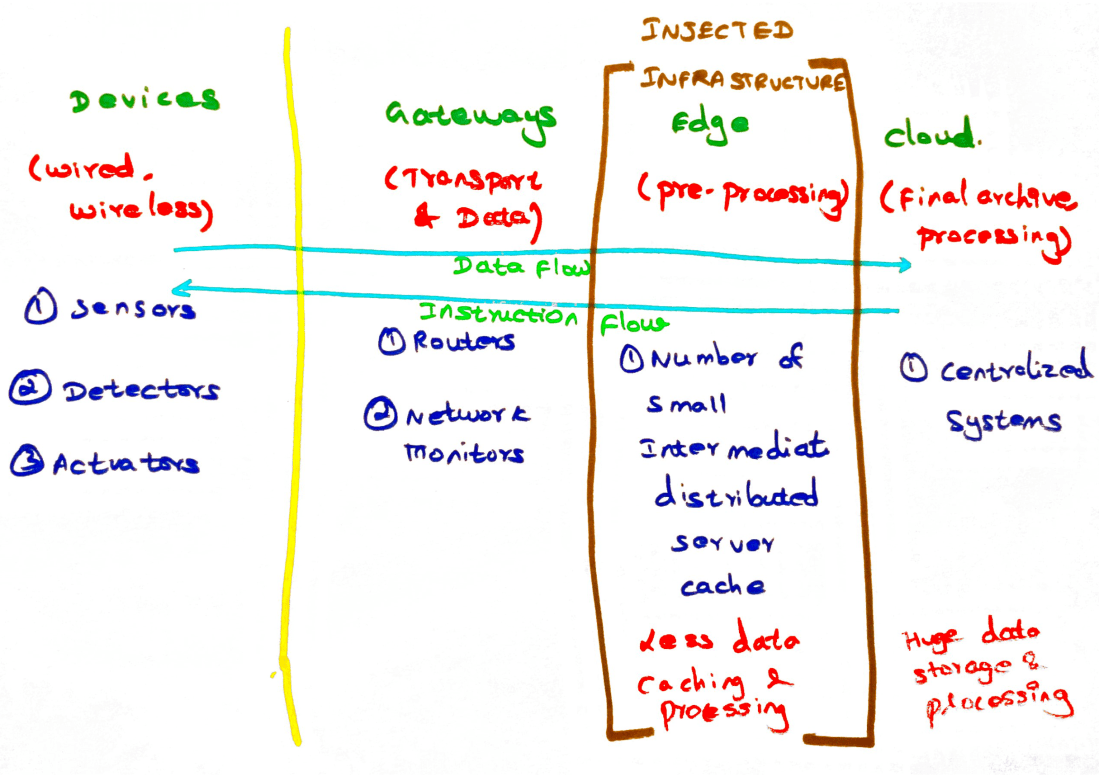

A simple solution could be to leave cloud computing as it is and introduce intermediate distributed computing engines between IoT devices and cloud servers.

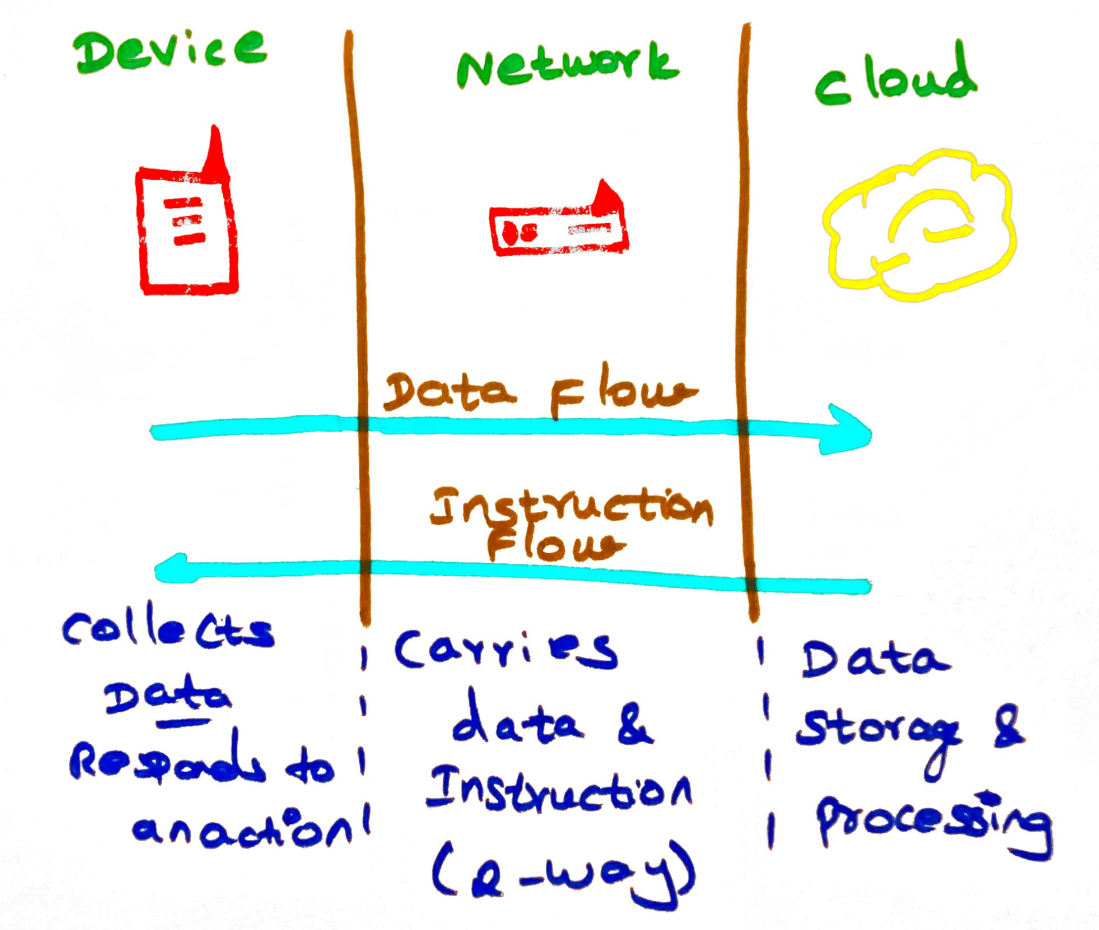

What does the current infrastructure look like?

Prototypes on IoT are built on top of the existing back-end cloud infrastructure.

What has to be added to the existing infrastructure?

A server that sits near the IoT devices themselves and can respond to them quickly, with minimal latency.

So, what really helps reduce latency?

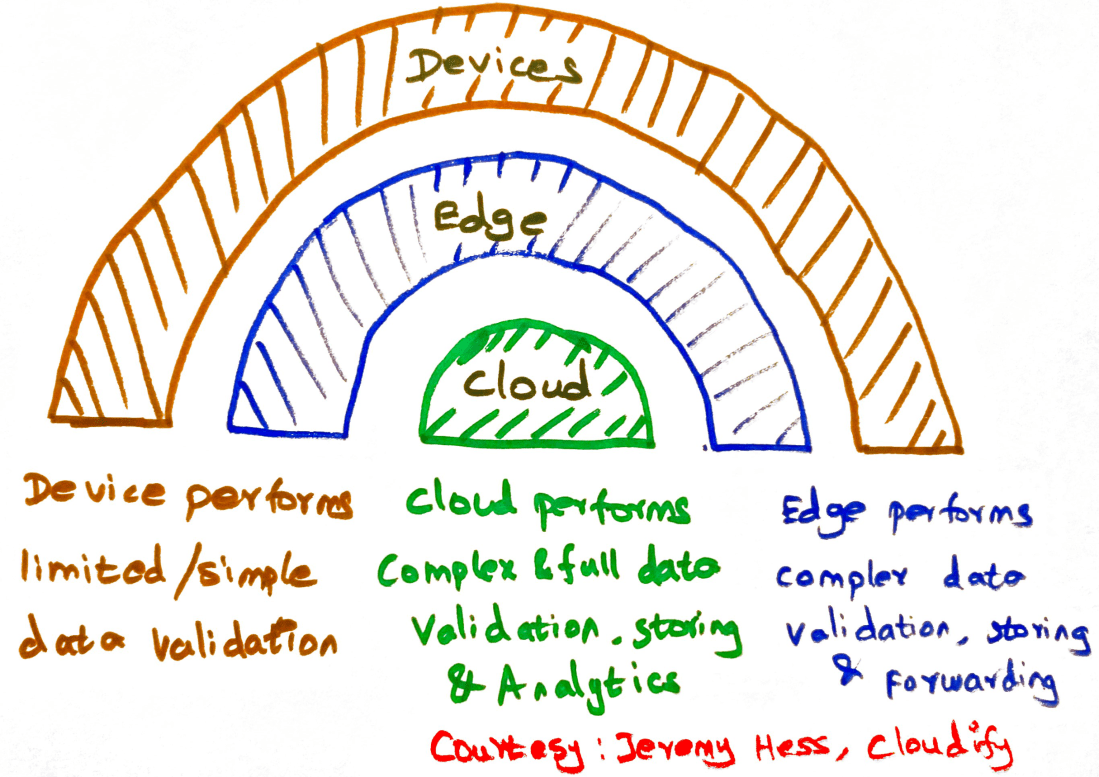

Edge Computing –

An intermediate computational engine placed between IoT devices and the cloud systems. It lets some validations be pushed down to an edge system that resides at the edge of the network. One suitable place for edge systems could be RAN (Radio Access Network) towers. The main purpose of edge computing is to avoid the longer round trip to the cloud. Unlike conventional cloud analytics, which collects data all the time and processes it at a cloud data center, edge computing eliminates unnecessary data transfer and can improve the user's security and privacy, since less data leaves the local network.
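To make the idea of pushing validations to the edge concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `Reading` type, the `handle_at_edge` function and the temperature range are hypothetical, not part of any real edge platform. The point is only that the edge node can answer the device immediately and decide on its own whether a reading is worth the round trip to the cloud.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    device_id: str
    temperature_c: float


# Hypothetical plausibility range for a temperature sensor (an assumption).
MIN_C, MAX_C = -40.0, 85.0


def handle_at_edge(reading: Reading) -> dict:
    """Validate a reading on the edge node and decide whether to forward it."""
    if not (MIN_C <= reading.temperature_c <= MAX_C):
        # Rejected locally: the device gets its answer without a cloud round trip.
        return {"status": "rejected", "forward_to_cloud": False}
    return {"status": "accepted", "forward_to_cloud": True}


ok = handle_at_edge(Reading("sensor-1", 21.5))
bad = handle_at_edge(Reading("sensor-1", 500.0))
```

In this sketch the implausible reading never leaves the edge, while the valid one is only flagged for forwarding; how and when it is actually sent upstream is left out.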

Edge Device –

IoT devices generate huge volumes of data on a regular basis, and some of that data is redundant. Edge devices carry simple business rules and logic that determine what data is collected and what data is sent over the network for further processing. Edge devices can also be called smart devices: over time they can learn and respond at the device level with minimal interaction with the server.
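One simple business rule of this kind is to send a value only when it differs enough from the last value that was sent. The sketch below assumes a hypothetical `DeadbandFilter` class and an arbitrary threshold of 0.5; a real device would choose its own rule and limits.

```python
from typing import Optional


class DeadbandFilter:
    """Send a value only if it moved at least `threshold` from the last sent value."""

    def __init__(self, threshold: float) -> None:
        self.threshold = threshold
        self.last_sent: Optional[float] = None

    def should_send(self, value: float) -> bool:
        if self.last_sent is None or abs(value - self.last_sent) >= self.threshold:
            self.last_sent = value
            return True
        # Near-duplicate of what the server already has: keep it on the device.
        return False


readings = [20.0, 20.1, 20.2, 21.0, 21.1, 25.0]
deadband = DeadbandFilter(threshold=0.5)
sent = [r for r in readings if deadband.should_send(r)]
print(sent)  # [20.0, 21.0, 25.0]
```

Here only three of the six readings cross the network; the other three are treated as redundant and dropped at the device.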

Edge Analytics –

Analytics performed on the device, on gateways or on edge servers is generally referred to as edge analytics. In other words, it is analytics performed on-site or at the edge of the network. It can be predictive, diagnostic or descriptive. We should be cautious about when, where and how edge analytics is rolled out. Be aware that the centralized data center may lose data because of edge analytics, since not every raw reading reaches it. In return, latency improves considerably, because things no longer need to transfer data over the network to the data center all the time. The advantages I see in edge analytics are less network traffic and no redundant data transfer or storage.
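As a small illustration of descriptive analytics at the edge, the sketch below collapses a window of raw readings into one summary record before anything is sent upstream. The function name `summarize_window` and the choice of statistics are assumptions made for this example.

```python
from statistics import mean


def summarize_window(values: list[float]) -> dict:
    """Reduce a window of raw readings to a single summary record."""
    if not values:
        raise ValueError("window must contain at least one reading")
    return {
        "count": len(values),
        "min": min(values),
        "max": max(values),
        "mean": mean(values),
    }


window = [10.0, 12.0, 14.0, 16.0]
summary = summarize_window(window)
print(summary)  # {'count': 4, 'min': 10.0, 'max': 16.0, 'mean': 13.0}
```

The trade-off described above is visible here: one record travels instead of four, but the data center can no longer reconstruct the individual readings from the summary.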

With more devices focused on IoT, edge will soon be the new standard for back-end infrastructure.