The industry is moving away from traditional, siloed development and adopting a more integrated approach, often referred to as the Intelligent Development Lifecycle (IDLC). This is a huge shift in mindset. Earlier digital operational models, from waterfall to agile, followed a linear sequence of steps. Each step was isolated, yet entirely dependent on the output of the phase before it. These models will soon be legacy, and we will see a transition toward new, highly collaborative ways of working.

What is IDLC?

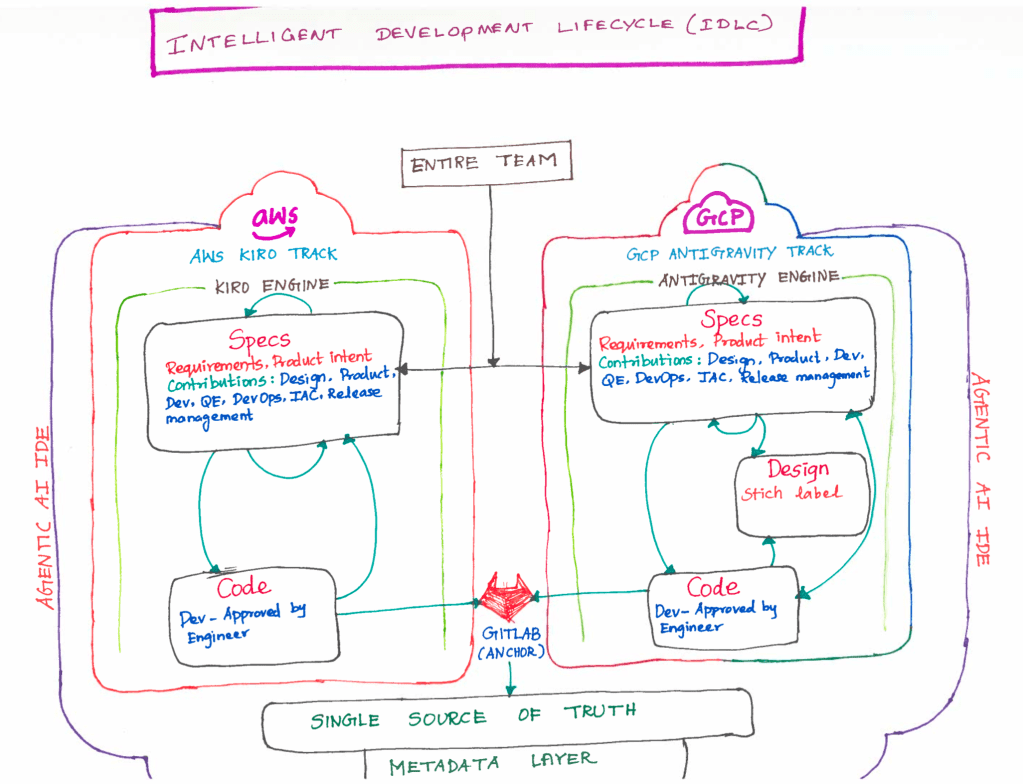

The Intelligent Development Lifecycle (IDLC) is an evolution of the Software Development Lifecycle (SDLC). It treats development as a data-driven ecosystem, in contrast with the traditional view of development as a linear sequence of tasks.

What are the characteristics of IDLC?

It is characterized by four core pillars –

1) Intent-first development – Development starts by defining the business intent. Once the intent is defined, AI agents use that intent data to suggest the necessary architecture, security protocols, infrastructure, and so on. Setting the intent up front guards against feature drift at later stages, because the outcome is locked to the intent.

2) Approval-based workflows – I would associate this with Human-in-the-Loop and Reinforcement Learning, mostly because AI sits at the center while humans act as architects and approvers. This way, humans focus on high-level decision-making while the system handles the repetitive tasks.

3) Single source of truth – This is my favorite part: establishing a single repository of metadata that describes the product. If a requirement changes in the metadata, the change automatically ripples through the documentation, the test cases, and the code. (I keep asking myself: would it introduce other challenges, like too many cooks in the kitchen? I do not have an answer at the moment. But one thing I do see: it may eliminate most of the status-update meetings we hold in Agile ceremonies just to keep everyone on the same page.)

4) Colocate all artifacts – To work efficiently, the AI needs all the context in one place. Traditionally, artifacts were scattered: code in GitHub, tasks in Jira, designs in Figma, infra in Terraform, test cases in TestRail, and so on. IDLC brings them all into the same room. By colocating specs, design tokens, architecture diagrams, backend code, infrastructure scripts, and so on, the AI gains a 360-degree view of the project.
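To make the second pillar more concrete, here is a minimal Python sketch of an approval gate. It assumes the AI's output arrives as discrete proposals and that nothing is applied until a human approves it. `Proposal`, `ApprovalGate`, and the `reviewer` function are hypothetical names; in practice the approver would be a person working through a review UI or a pull request, not a Python function.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Proposal:
    """A change suggested by an AI agent (hypothetical structure)."""
    summary: str
    risk: str  # "low" or "high"


class ApprovalGate:
    """Applies AI proposals only after a human approver says yes."""

    def __init__(self, approver: Callable[[Proposal], bool]):
        self.approver = approver
        self.applied: list[str] = []
        self.rejected: list[str] = []

    def submit(self, proposal: Proposal) -> bool:
        # Every proposal passes through the human; the agent never applies directly.
        if self.approver(proposal):
            self.applied.append(proposal.summary)
            return True
        self.rejected.append(proposal.summary)
        return False


# Stand-in for a human reviewer: approve low-risk changes, hold high-risk ones.
def reviewer(p: Proposal) -> bool:
    return p.risk == "low"


gate = ApprovalGate(reviewer)
gate.submit(Proposal("rename helper function", "low"))
gate.submit(Proposal("change auth token lifetime", "high"))
print(gate.applied)   # ['rename helper function']
print(gate.rejected)  # ['change auth token lifetime']
```

The rejected proposals are also a natural place to collect feedback signals, should you later want to bring reinforcement learning into the loop.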
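The third pillar's ripple effect can be sketched as a publish/subscribe pattern: the metadata store is the single source of truth, and the generators for documentation and tests subscribe to it. This is a minimal illustration that assumes each downstream artifact can be regenerated from metadata; `MetadataStore`, `regenerate_docs`, and `regenerate_tests` are hypothetical.

```python
from typing import Callable

Listener = Callable[[str, object], str]


class MetadataStore:
    """Single source of truth: downstream artifacts subscribe to changes."""

    def __init__(self) -> None:
        self._data: dict[str, object] = {}
        self._listeners: list[Listener] = []
        self.log: list[str] = []

    def subscribe(self, listener: Listener) -> None:
        self._listeners.append(listener)

    def set(self, key: str, value: object) -> None:
        self._data[key] = value
        # Ripple the change to every subscribed artifact generator.
        for listener in self._listeners:
            self.log.append(listener(key, value))


def regenerate_docs(key: str, value: object) -> str:
    return f"docs updated: {key}={value}"


def regenerate_tests(key: str, value: object) -> str:
    return f"tests updated: {key}={value}"


store = MetadataStore()
store.subscribe(regenerate_docs)
store.subscribe(regenerate_tests)
store.set("password_min_length", 12)
print(store.log)
# ['docs updated: password_min_length=12', 'tests updated: password_min_length=12']
```

The concern about many people editing the same source shows up here too: if two people call `set` on the same key, the last write silently wins, so a real store would need ownership or review rules on top.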

How is IDLC different?

The Software Development Lifecycle (SDLC) focuses on how to build code. In contrast, the IDLC focuses on the context of that code. It tries to integrate the product intent, design and architecture specs, and cross-cloud infrastructure into a single, automated flow.

What are the core phases of IDLC?

In an IDLC framework, the traditional ‘Plan-Code-Test’ loop is augmented with intelligent layers.

1) Ecosystem analysis and creation – Before requirements are written, IDLC analyzes the existing ecosystem (people, projects, products, infrastructure). It asks: does this solve a real pain point? This stage uses AI to analyze market trends and existing codebase metadata to reduce the risk that the new feature creates technical debt.

2) Intelligent specs and design – Rather than focusing on creating the artifact itself, IDLC focuses on creating structured specifications.

a) Design as code – Figma designs are linked to design tokens that flow directly into the backend metadata.

b) Single source of truth – Every decision made by product or design is captured as metadata, which agentic AI development engines use to auto-generate boilerplate code or infrastructure.

3) The inner loop (AI-augmented development) – During the coding phase, IDLC uses AI assistants (such as GitHub Copilot or custom internal tools) that don’t just suggest syntax but also understand the product metadata. The AI knows that ‘Feature A’ requires ‘Security Level 4’ because it is written in the metadata, and it suggests code accordingly.

4) Instrumentation and observability – IDLC assumes that ‘Build to last’ is secondary to ‘Build to change’, which is a huge shift from the traditional development mindset. Every component is instrumented with metadata from day one, making the entire lifecycle observable. If a requirement changes in the specs layer, the IDLC pipeline can flag exactly which code and infrastructure components are now out of sync.
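For the design-as-code idea in phase 2, design tokens are usually kept as nested name/value data. As a minimal sketch, the function below flattens a nested token dictionary into CSS custom properties, a flat form that frontend code and backend metadata could both reference. The token names and the `--group-name` naming scheme are assumptions for illustration; the sketch does not call the Figma API.

```python
def flatten_tokens(tokens: dict, prefix: str = "") -> dict[str, str]:
    """Flatten nested design tokens into CSS custom-property names."""
    flat: dict[str, str] = {}
    for name, value in tokens.items():
        key = f"{prefix}-{name}" if prefix else name
        if isinstance(value, dict):
            # Recurse into token groups, extending the name prefix.
            flat.update(flatten_tokens(value, key))
        else:
            flat[f"--{key}"] = str(value)
    return flat


tokens = {
    "color": {"primary": "#0055ff", "surface": "#ffffff"},
    "spacing": {"sm": "4px", "md": "8px"},
}
flat = flatten_tokens(tokens)
css = ":root {\n" + "\n".join(f"  {k}: {v};" for k, v in flat.items()) + "\n}"
print(css)
```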
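Phase 3's ‘Feature A requires Security Level 4’ example can be sketched as a simple metadata lookup that runs before the assistant generates code. The mapping from security levels to controls below is entirely hypothetical; a real organization would define its own levels and controls.

```python
# Hypothetical product metadata: feature -> required security level.
PRODUCT_METADATA = {
    "Feature A": {"security_level": 4},
    "Feature B": {"security_level": 1},
}

# Hypothetical mapping from security level to controls the generated code must include.
CONTROLS_BY_LEVEL = {
    1: ["input validation"],
    2: ["input validation", "authentication"],
    3: ["input validation", "authentication", "audit logging"],
    4: ["input validation", "authentication", "audit logging", "encryption at rest"],
}


def guardrails_for(feature: str) -> list[str]:
    """Look up the controls an AI assistant should apply when coding this feature."""
    level = PRODUCT_METADATA[feature]["security_level"]
    return CONTROLS_BY_LEVEL[level]


def build_prompt(feature: str, task: str) -> str:
    """Attach the metadata-derived controls to the task given to the assistant."""
    controls = ", ".join(guardrails_for(feature))
    return f"Task: {task}\nFeature: {feature}\nRequired controls: {controls}"


print(build_prompt("Feature A", "add an endpoint to export user data"))
```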
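For phase 4, one way to flag out-of-sync components is to record, for each component, a fingerprint of the spec section it was built from, and compare fingerprints whenever the spec changes. This minimal sketch assumes that convention; the component names and the `spec_ref` and `built_from` fields are hypothetical.

```python
import hashlib
from dataclasses import dataclass


def fingerprint(text: str) -> str:
    """Stable short hash of a spec section."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


@dataclass
class Component:
    name: str
    spec_ref: str    # which spec section this component implements
    built_from: str  # fingerprint of that section at build time


def out_of_sync(spec: dict[str, str], components: list[Component]) -> list[str]:
    """Return components whose spec section changed since they were built."""
    return [c.name for c in components if fingerprint(spec[c.spec_ref]) != c.built_from]


spec_v1 = {
    "login": "When credentials are valid, the system shall issue a session.",
    "audit": "The system shall log every login attempt.",
}
components = [
    Component("auth-service", "login", fingerprint(spec_v1["login"])),
    Component("session-table.tf", "login", fingerprint(spec_v1["login"])),
    Component("audit-logger", "audit", fingerprint(spec_v1["audit"])),
]

# The login requirement changes; only components built from it are flagged.
spec_v2 = dict(
    spec_v1,
    login="When credentials are valid, the system shall issue a session and require MFA.",
)
print(out_of_sync(spec_v2, components))  # ['auth-service', 'session-table.tf']
```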

What features are available today to support this change?

Transitioning to an Intelligent Development Lifecycle (IDLC) isn’t just about downloading a new IDE – it’s a fundamental change in how your team interacts with technology.

Before looking at the tools, we have to address the mindset change. In the old world, you were a ‘Writer of artifacts’. In the IDLC world, you are an ‘Architect of intent’. You stop worrying about syntax and semicolons and start focusing on whether the system’s behavior matches the product’s goal.

Once that mindset clicks, you can leverage these six powerful features (using AWS Kiro’s terminology) to automate your day-to-day tasks.

1. Spec-Driven Development (Using Specs) – In IDLC, the spec is a first-class citizen. It is a structured Markdown file (often written in EARS notation, the Easy Approach to Requirements Syntax) that describes exactly what a feature should do.

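How it works? You don’t tell the AI ‘Build a login page’. Instead, you provide a feature spec that spells out the trigger-and-response logic, for example ‘When the user submits valid credentials, the system shall open the dashboard’. The AI reads this spec and treats it as a binding contract for the code it generates.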
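To show what a structured spec gives the AI to work with, here is a small sketch that classifies requirement lines by EARS pattern using regular expressions. The five templates (ubiquitous, event-driven, state-driven, unwanted behavior, optional feature) are the standard EARS forms; the sample requirements and the idea of flagging non-conforming lines are my own illustration, not how any particular tool parses specs.

```python
import re

# Standard EARS templates, checked in order; the ubiquitous form comes last.
EARS_PATTERNS = [
    ("event-driven", re.compile(r"^When .+, the .+ shall .+$", re.IGNORECASE)),
    ("state-driven", re.compile(r"^While .+, the .+ shall .+$", re.IGNORECASE)),
    ("unwanted-behavior", re.compile(r"^If .+, then the .+ shall .+$", re.IGNORECASE)),
    ("optional-feature", re.compile(r"^Where .+, the .+ shall .+$", re.IGNORECASE)),
    ("ubiquitous", re.compile(r"^The .+ shall .+$", re.IGNORECASE)),
]


def classify(requirement: str) -> str:
    """Return the EARS pattern a requirement follows, or 'non-conforming'."""
    text = requirement.strip()
    for name, pattern in EARS_PATTERNS:
        if pattern.match(text):
            return name
    return "non-conforming"


spec = [
    "When the user submits valid credentials, the system shall open the dashboard.",
    "If the password is wrong three times, then the system shall lock the account.",
    "The system shall hash all stored passwords.",
    "Build a login page",
]
for line in spec:
    print(f"{classify(line):18} | {line}")
```

Matching is case-insensitive, so all-caps keywords such as ‘WHEN’ and ‘SHALL’ are also recognized. A line like ‘Build a login page’ is reported as non-conforming, which is exactly the kind of vague instruction a spec is meant to replace.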

2. Powers – Think of powers as ‘Instant expertise packages’. They are curated bundles that include specialized tools, documentation, and logic for specific domains.

How it works? If you need to integrate Stripe, you load the ‘Stripe Power’. Suddenly, your AI agent has deep knowledge of Stripe’s API, security best practices, and common pitfalls, allowing it to work like a senior payments engineer.
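A rough sketch of the ‘load expertise only when needed’ idea: each power bundles tools and docs and is activated only when the task mentions one of its trigger keywords. The `Power` structure, keywords, tool names, and doc file names are hypothetical and do not reflect Kiro’s actual power format.

```python
from dataclasses import dataclass, field


@dataclass
class Power:
    """Hypothetical bundle of domain tools and docs."""
    name: str
    keywords: set[str]
    tools: list[str] = field(default_factory=list)
    docs: list[str] = field(default_factory=list)


POWERS = [
    Power("stripe", {"stripe", "payment", "checkout"},
          tools=["create_payment_intent"], docs=["stripe-webhooks.md"]),
    Power("terraform", {"terraform", "infra", "iac"},
          tools=["terraform_plan"], docs=["module-conventions.md"]),
]


def active_powers(task: str) -> list[str]:
    """Return the powers whose keywords appear in the task description."""
    words = set(task.lower().replace(",", " ").split())
    return [p.name for p in POWERS if p.keywords & words]


print(active_powers("Add Stripe checkout to the cart page"))  # ['stripe']
print(active_powers("Refactor the README"))                    # []
```

In this sketch, only the matching bundle's tools and docs would be handed to the agent, which keeps its context focused on the task at hand.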

3. Hooks – Hooks are the event-driven part of IDLC. They let you automate what I call ‘the boring stuff’ based on specific triggers in your workflow.

How it works? You can set a hook that says: ‘Whenever a spec file is updated, automatically regenerate the unit tests’ or ‘When I save a file, run a security scan’. It keeps the codebase healthy without you having to remember to run commands manually.
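Both hook examples boil down to ‘event plus file pattern triggers an action’. Here is a minimal Python sketch of that dispatch using the standard library’s `fnmatch` for glob matching. The event names, patterns, and actions are hypothetical; this is not Kiro’s hook configuration format.

```python
from dataclasses import dataclass
from fnmatch import fnmatch
from typing import Callable


@dataclass
class Hook:
    event: str                    # hypothetical event name, e.g. "file_saved"
    pattern: str                  # glob matched against the file path
    action: Callable[[str], str]  # what to run when the hook fires


def regenerate_tests(path: str) -> str:
    return f"regenerating unit tests for {path}"


def security_scan(path: str) -> str:
    return f"running security scan on {path}"


HOOKS = [
    Hook("spec_updated", "specs/*.md", regenerate_tests),
    Hook("file_saved", "*.py", security_scan),
]


def fire(event: str, path: str) -> list[str]:
    """Run every hook registered for this event whose pattern matches the path."""
    return [h.action(path) for h in HOOKS if h.event == event and fnmatch(path, h.pattern)]


print(fire("spec_updated", "specs/login.md"))
print(fire("file_saved", "src/auth.py"))
print(fire("file_saved", "README.md"))  # no matching hook, so nothing runs
```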

4. Steering – Steering is how you maintain control without micromanaging. It relies on steering files that act as ‘the enterprise house rules’.

How it works? You define your team’s coding standards once, for example ‘We always use functional components and Tailwind CSS’. The AI agent consults these files before every action, so it avoids suggesting code that violates your team’s style. One disadvantage of steering, though: it loads everything into the context right from the start and keeps it there. You will have to find the right balance between how much guidance you provide and how much context it consumes.
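One way to think about that balance is to scope rules: keep a few rules always on and include the rest only when the file being edited matches. The sketch below compares loading every rule against loading scoped rules, using word count as a crude stand-in for token usage. The rules, globs, and `applies_to` field are hypothetical; whether a given tool supports scoped steering is something to check in its documentation.

```python
from dataclasses import dataclass
from fnmatch import fnmatch


@dataclass
class SteeringRule:
    text: str
    applies_to: str = "*"  # glob; "*" means the rule is always included


RULES = [
    SteeringRule("Always use functional components."),
    SteeringRule("Use Tailwind CSS utility classes for styling.", "*.tsx"),
    SteeringRule("Every Terraform resource must carry an owner tag.", "*.tf"),
]


def build_context(file_path: str, always_load_all: bool = False) -> list[str]:
    """Select the steering rules to prepend to the agent's prompt."""
    if always_load_all:
        return [r.text for r in RULES]
    return [r.text for r in RULES if fnmatch(file_path, r.applies_to)]


def rough_size(rules: list[str]) -> int:
    """Word count as a crude stand-in for token usage."""
    return sum(len(r.split()) for r in rules)


all_rules = build_context("ui/Button.tsx", always_load_all=True)
scoped = build_context("ui/Button.tsx")
print(rough_size(all_rules), rough_size(scoped))  # 19 11
```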

5. Agent skills – Think of them as playbooks. They are structured workflows that guide an AI through complex, multi-step tasks.

How it works? A ‘Deployment Skill’ might include steps for architecture planning, cost estimation, and IaC (Infrastructure as Code) generation. The agent follows the skill step by step to produce a consistent, production-ready result. Skills are portable and can be loaded automatically or manually, depending on your needs.
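A skill can be sketched as an ordered list of named steps that share a context. This minimal example assumes each step is a plain function; the step bodies, the hourly rate, and the 730-hours-per-month figure are placeholders rather than real planning or pricing logic.

```python
from typing import Callable

Step = Callable[[dict], dict]


def plan_architecture(ctx: dict) -> dict:
    ctx["architecture"] = f"container service for {ctx['app']}"
    return ctx


def estimate_cost(ctx: dict) -> dict:
    # Placeholder arithmetic: instances * hourly rate * ~730 hours per month.
    ctx["monthly_cost"] = ctx["instances"] * ctx["hourly_rate"] * 730
    return ctx


def generate_iac(ctx: dict) -> dict:
    # Placeholder: a real step would emit Terraform or similar IaC.
    ctx["iac"] = f"# IaC for {ctx['architecture']} x{ctx['instances']}"
    return ctx


DEPLOYMENT_SKILL: list[tuple[str, Step]] = [
    ("architecture planning", plan_architecture),
    ("cost estimation", estimate_cost),
    ("IaC generation", generate_iac),
]


def run_skill(skill: list[tuple[str, Step]], ctx: dict) -> dict:
    """Execute each step in order, passing the shared context along."""
    for name, step in skill:
        ctx = step(ctx)
        ctx.setdefault("completed", []).append(name)
    return ctx


result = run_skill(DEPLOYMENT_SKILL, {"app": "checkout", "instances": 2, "hourly_rate": 0.05})
print(result["completed"])
print(round(result["monthly_cost"], 2))  # 73.0
```

Because the skill is just data (an ordered list of steps), the same playbook can be handed to a different agent or project, which is what makes it portable.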

6. Model Context Protocol (MCP) – MCP lets AI agents connect to and talk with the outside world. I treat it as the RAG (Retrieval-Augmented Generation) element of this whole architecture.

How it works? Through MCP servers, your IDE can pull live data from Jira, Notion, or Figma. This means the AI isn’t just guessing based on old training data; it has real-time context from the tools your project actually uses.
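Under the hood, MCP messages use JSON-RPC 2.0. As a minimal sketch, this builds the `tools/call` request a client sends to an MCP server. The `tools/call` method and its `name` and `arguments` parameters come from the MCP specification; the `get_issue` tool and its `issue_key` argument are hypothetical, because each server (Jira, Notion, Figma, and so on) defines its own tools. The transport (stdio or HTTP) is left out.

```python
import json
from itertools import count

# Monotonic request ids, as JSON-RPC requires each request to carry an id.
_ids = count(1)


def tools_call(tool: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request invoking an MCP tool."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(request)


# Hypothetical tool exposed by a Jira MCP server.
message = tools_call("get_issue", {"issue_key": "PROJ-123"})
print(message)
```

A real client would first complete the `initialize` handshake and call `tools/list` to discover which tools the server actually offers.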

Are IDEs no longer just for programming?

The short answer is yes. The IDE is evolving from a simple code editor into a mission control center for the entire team.

Which tools are available today?

Popular agentic AI tools today include AWS Kiro and Google Antigravity.

Share what you have learned about IDLC and how you are implementing it in your teams.

Happy learning!